The Feature Flag That Taught Me Three Hard Lessons

Table of Contents

- The Setup

- Case 1: The Zombie Flag

- Case 2: The Name Collision Nightmare

- Case 3: The Flag Matrix — When Testing Becomes a Nightmare

- Looking Back

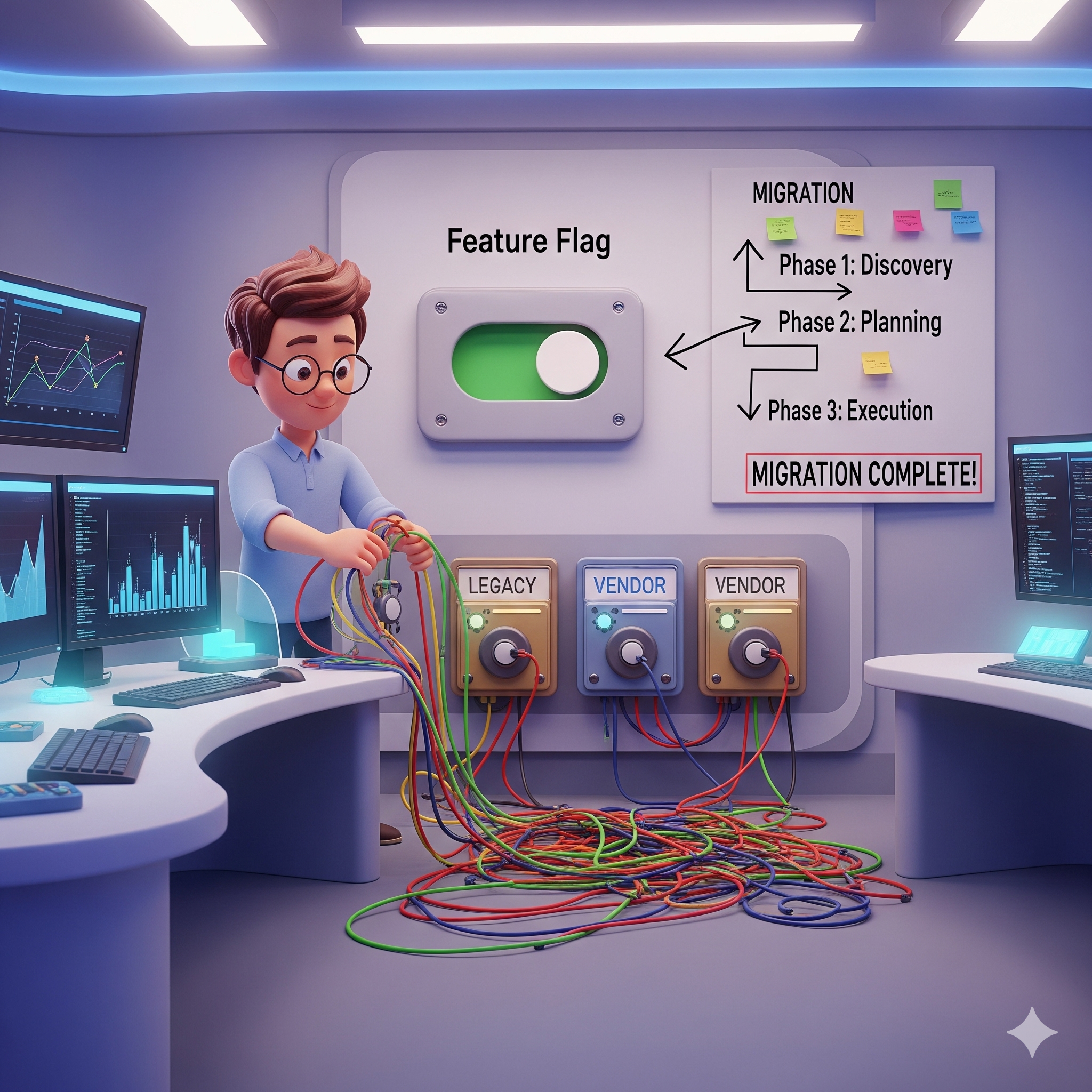

Feature flags feel like magic when you first discover them. You can toggle behavior in production without a new deployment, roll back instantly when something breaks, and run experiments safely. I was no different — I loved them. Until I didn’t.

This is the story of a migration from our legacy in-house file management system to a third-party vendor solution. It started clean, gradually got complicated, and left me untangling a mess that took weeks to resolve. Three problems, each one sneakier than the last.

The Setup

Our in-house file management system had served us well, but it was aging. We decided to migrate to a third-party vendor — better scalability, built-in compliance, and reduced maintenance overhead. The migration couldn’t happen overnight, so I introduced a feature flag to control the switchover gracefully. The idea was simple: if the flag is on, route traffic to the vendor system; if it’s off, fall back to the legacy one.

I flipped the flag, monitored the rollout carefully, and the migration completed without a single incident. It felt like a textbook success.

Then I got busy. The cleanup could wait a few days.

Those “few days” became a few months.

Case 1: The Zombie Flag

A zombie flag is a flag that has outlived its purpose but continues to walk among the living — and worse, the living start relying on it.

While my migration flag was sitting around uncleaned, another team noticed it. The name sounded relevant to what they were building — ui box preview feature that was also part of the vendor rollout. Without checking with anyone, they went ahead and gated their own logic behind the same flag. It was a reasonable assumption on their part. The flag name clearly pointed to the vendor system, and their feature was part of the vendor system. What they didn’t know was that my flag was always meant to be temporary scaffolding, not a long-term control mechanism.

When I finally went to delete the flag and remove the old code, I discovered it had quietly become the backbone of three different migrations of other features for the same purpose. Removing it now required cross-team coordination, regression testing, and a lot of stressful back-and-forth that nobody had budgeted time for.

The lesson: A flag without a clear owner and an expiry plan is a liability the moment it’s created. Treat cleanup as part of the original task, not an afterthought. The day the migration succeeds is the day the flag removal should go onto the backlog — with a hard deadline. Communicate that deadline clearly to the entire engineering team, and follow up on it relentlessly. If a flag is still around after its purpose is served, it’s not just clutter — it’s a ticking time bomb for future confusion and technical debt.

Case 2: The Name Collision Nightmare

Even if no one had reused my flag, we still had a second problem quietly brewing: similar flag names scattered across different systems.

Because the file management migration was a company-wide initiative, multiple teams introduced their own flags independently. The result was a cluster of names that looked nearly identical — one for the backend file service, one for the proxy routing layer and two for the UI. Each name was a slight variation of the same theme. Here are some example flag names for different migration contexts:

alts_us_box_migration_backendalts_box_migration_backendalts_box_migration_proxyalts_box_ui_migrationalts_abs_box_migration

Each flag name includes the migration context (alts, us, box, abs), the layer it controls, and is specific enough to avoid confusion.

QA struggled to determine which flag combination would enable the required functionality for end-to-end testing. If the wrong combination was toggled, something would inevitably break, and it was difficult to pinpoint the cause. During a late-night debugging session, we had to go through the codebase to understand how each flag was used and what combination would actually enable the desired functionality. It took hours to diagnose, and the confusion stemmed from the complexity and ambiguity of the flag setup.

The lesson: Flag names need to be specific enough that no one could reasonably confuse them. Include the team name, the layer it controls, and the context — even if the result feels overly verbose. A long, unambiguous flag name is always better than a short one that causes a incident at midnight because someone toggled the wrong flag. If you find yourself needing to explain the difference between two flags in a conversation, that’s a sign your naming isn’t clear enough. The goal is to make it impossible for someone to toggle the wrong flag without realizing it.

Case 3: The Flag Matrix — When Testing Becomes a Nightmare

This was the worst of the three, and it’s the one I think about most.

Because the migration touched multiple layers — the UI file picker, the proxy routing layer, and the backend storage service — each team naturally introduced their own flag for their slice of the work. On paper, that seemed reasonable. In practice, it meant the real behavior of the entire system was now controlled by a combination of flags, not a single one.

With just three flags, each able to be on or off independently, you end up with eight possible system states. But only a handful of those combinations were actually valid. The rest produced subtle failures — files uploading successfully on the backend but returning broken preview URLs on the UI, or the proxy routing requests to the vendor while the backend was still writing to legacy storage. These weren’t loud crashes. They were silent data inconsistencies that slipped through because nobody had thought to test the partial migration states.

Nobody owned the interaction between the flags. Each team tested their own flag in isolation and considered it done. The bugs only appeared at the boundaries, and those boundaries belonged to no one.

The lesson: When a single migration spans multiple layers, it should be controlled by a single shared migration state — not independent flags per team. That state should have clearly defined values like “legacy,” “canary,” “vendor,” and “rollback,” and one team should own it. Testing four well-understood states is manageable. Testing six unpredictable flag combinations is not. If you find yourself needing to test multiple flags together to understand the system behavior, that’s a sign you need to consolidate them into a single migration state. The complexity of testing grows exponentially with each additional flag, so it’s crucial to keep the number of independent flags as low as possible when they control related functionality.

Looking Back

Migrating from our in-house file system to a third-party vendor was the right technical decision. But the way I managed the feature flag around it created unnecessary pain that lasted long after the migration itself was over.

Here is what I would do differently:

- Plan the cleanup before writing the flag — the deletion date should exist before the flag is ever deployed

- Use specific, namespaced flag names that communicate ownership, layer, and scope clearly, even at the cost of being verbose

- Treat multi-layer migrations as a single coordinated unit — one migration state, one owner, not independent flags per team

- Communicate proactively — a single message in a shared engineering channel saying “this flag is temporary and will be removed next sprint” would have prevented most of the confusion

- Search the codebase thoroughly before cleanup — flag names can spread faster than you expect, and assumptions are dangerous

Feature flags are powerful tools, but they come with hidden costs in code complexity, testing surface area, and team communication overhead. The longer they live beyond their purpose, the more expensive they become.

The next time I introduce a flag, the very first thing I will plan is how to get rid of it.